We do not know the distribution of the weather before it happens. Let’s assume we are reporting Tokyo’s weather to New York, and we want to encode the message into the smallest possible size. However, what does it mean to estimate the entropy?Īnswering these questions naturally yields in cross-entropy. So, we would need to estimate the probability distribution. If we do not know the probability distribution, we can not calculate the entropy. In short, the entropy tells us the theoretical minimum average encoding size for events that follow a particular probability distribution.Īs long as we know the probability distribution of anything, we can calculate the entropy for it. People use all these notations interchangeably, and we see them used in different places when we read articles and papers that use entropy concepts. The entropy is also written using H as follows:

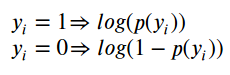

X~P means that we calculate the expectation with the probability distribution P. So, the above formula can be re-written in the expectation form as follows: In both discrete and continuous variable cases, we are calculating the expectation (average) of the negative log probability which is the theoretical minimum encoding size of the information from the event x. Here, x is a continuous variable, and P(x) is the probability density function. The term i indicates a discrete event that could mean different things depending on the scenario you are dealing.įor continuous variables, it can be written using the integral form: We assume that we know the probability P for each i. The entropy of a probability distribution is as follows: My article Entropy Demystified should help you understand the entropy concept in case you are not already familiar with it.Ĭlaude Shannon ( ) defined the entropy to calculate the minimum encoding size as he was looking for a way to efficiently send messages without losing any information.Īs we will see below, there are various ways of expressing entropy. The word “cross-entropy” has “cross” and “entropy” in it, and it helps to understand the “entropy” part to understand the “cross” part. If so, reading this article should help to demystify those questions. Some might have seen the binary cross-entropy and wondered whether it is fundamentally different from the cross-entropy or not. Some of us might have used cross-entropy for calculating classification losses and wondered why we use the natural logarithm. What is it? Is there any relation to the entropy concept? Why is it used for classification loss? What about the binary cross-entropy?
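
To make the discrete entropy formula and its expectation form concrete, here is a minimal Python sketch. The weather events and their probabilities are hypothetical, chosen only for illustration, and logarithms are taken in base 2 so the result is in bits. The helper `entropy` (a name introduced here) evaluates $-\sum_i P(i)\log_2 P(i)$ directly, while the hypothetical helper `entropy_monte_carlo` draws samples $x \sim P$ and averages $-\log_2 P(x)$, which should approach the same value.

```python
import math
import random

# Hypothetical weather distribution P (made-up probabilities, for illustration only).
P = {"sunny": 0.5, "cloudy": 0.25, "rainy": 0.125, "snowy": 0.125}


def entropy(p):
    """Entropy in bits: -sum_i P(i) log2 P(i). Events with P(i) == 0 contribute nothing."""
    return -sum(pi * math.log2(pi) for pi in p.values() if pi > 0)


def entropy_monte_carlo(p, n_samples=100_000, seed=0):
    """Estimate E_{x~P}[-log2 P(x)] by averaging -log2 P(x) over samples drawn from P."""
    rng = random.Random(seed)
    events = list(p)
    weights = [p[e] for e in events]
    samples = rng.choices(events, weights=weights, k=n_samples)
    return sum(-math.log2(p[x]) for x in samples) / n_samples


print(entropy(P))              # → 1.75
print(entropy_monte_carlo(P))  # close to 1.75; varies slightly with the seed
```

With these made-up probabilities, the exact entropy is 1.75 bits: encoding “sunny” costs 1 bit, “cloudy” 2 bits, and “rainy” or “snowy” 3 bits each, weighted by how often each event occurs.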

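For the integral form, the same idea can be checked numerically. The sketch below assumes a normal distribution with standard deviation $\sigma = 2$ (an example not taken from the article) and uses natural logarithms, so the result is in nats. The hypothetical helper `differential_entropy` approximates $-\int P(x)\ln P(x)\,dx$ with the midpoint rule over a wide finite interval and compares the result with the known closed form $\tfrac{1}{2}\ln(2\pi e\sigma^2)$ for a Gaussian.

```python
import math


def gaussian_pdf(x, mu=0.0, sigma=1.0):
    """Probability density function P(x) of a normal distribution."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def differential_entropy(pdf, lo, hi, n=100_000):
    """Approximate -∫ P(x) ln P(x) dx over [lo, hi] with the midpoint rule (result in nats)."""
    dx = (hi - lo) / n
    total = 0.0
    for k in range(n):
        p = pdf(lo + (k + 0.5) * dx)  # density at the midpoint of the k-th slice
        if p > 0.0:                   # skip points where the density underflows to zero
            total -= p * math.log(p) * dx
    return total


sigma = 2.0
numeric = differential_entropy(lambda x: gaussian_pdf(x, 0.0, sigma), -12 * sigma, 12 * sigma)
closed_form = 0.5 * math.log(2.0 * math.pi * math.e * sigma ** 2)
print(numeric, closed_form)  # the two values should agree closely
```

Truncating the integral at ±12σ is a choice made for this sketch: the density beyond that range is so small that its contribution is negligible, and the fine grid keeps the midpoint-rule error tiny.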